Politics

How to Shine a Search Light Through Terabytes of Data to Get to “Tag You Are It” – ReadWrite

Published 3 years ago

By Drew Simpson

Have terabytes of data at your fingertips but no ability to find anything? This article lists hard-won tips from many years of working at an enterprise and developer search engine software company. While the tips use the terminology of the dtSearch® product line, they are generally applicable.

How to Shine a Search Light Through Terabytes of Data

Build an Index

The first tip is to use the search engine to build an index instead of simply doing an unindexed search. Unindexed search is slow. Indexed search is typically instantaneous, even for multiple concurrent search requests across terabytes. (As a technical matter, concurrent indexed searches can run from different threads in an online or network environment without affecting each other.)

What is an index?

An index is simply an internal tool that lets the search engine search terabytes in an instant. How do you get such an index? Just point to whatever you want to index, and the search engine will do the rest. It is no problem if you don’t have a clear idea of what is in your data.
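To make the idea concrete, here is a minimal sketch of an inverted index, the general data structure behind most full-text search. It is an illustration only, not how dtSearch or any particular product stores its index; the function names and sample documents are hypothetical.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"\w+", text.lower())

def build_index(docs):
    """Map each word to the set of document names that contain it."""
    index = defaultdict(set)
    for name, text in docs.items():
        for word in tokenize(text):
            index[word].add(name)
    return index

def search(index, word):
    """Look the word up directly instead of scanning every document."""
    return sorted(index.get(word.lower(), set()))

# Hypothetical sample data standing in for real files.
docs = {
    "memo.txt": "Quarterly budget review",
    "notes.txt": "Budget meeting notes",
    "todo.txt": "Water the plants",
}
index = build_index(docs)
print(search(index, "budget"))
```

The work of reading every document happens once, at index time. Each later search is a dictionary lookup, which is why indexed search stays fast as the data grows.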

The search engine can automatically identify file formats like Microsoft Word, Access, Excel, PowerPoint, and OneNote; email files; PDFs; and web-based formats like HTML or XML.

The search engine can automatically sift through compressed archives like RAR and ZIP to index the files.

But what if some of the PDF files are saved with MS Word file extensions like .DOCX — and some Access files are saved with Excel file extensions, etc.?

This situation does not present a problem. The search engine’s document filters, which parse the data, can look inside each file to determine the correct file type without reference to the file extension.
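One common way to identify a file type without trusting its extension is to check the leading "magic" bytes. The sketch below illustrates that general technique with a few well-known signatures; it is not a description of how any specific document filter works, and the labels and function name are hypothetical.

```python
# Well-known leading byte signatures for a few container formats.
SIGNATURES = [
    (b"%PDF-", "pdf"),
    (b"PK\x03\x04", "zip-container"),  # ZIP, and ZIP-based formats such as DOCX and XLSX
    (b"\xd0\xcf\x11\xe0\xa1\xb1\x1a\xe1", "ole2-compound"),  # legacy Office files such as DOC, XLS, MSG
    (b"Rar!\x1a\x07", "rar"),
]

def sniff_type(data: bytes) -> str:
    """Return a coarse type label based on the first bytes, ignoring the file name."""
    for magic, label in SIGNATURES:
        if data.startswith(magic):
            return label
    return "unknown"

# A file named report.docx whose content is really a PDF.
mislabeled = b"%PDF-1.7\n(rest of the file)"
print(sniff_type(mislabeled))
```

A real filter has to go further, for example by opening a ZIP container to tell a DOCX from an XLSX, but the principle is the same: the content decides, not the name.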

The document filters can also go through files looking for nested documents.

If there is a ZIP or RAR file with an embedded Excel file and embedded in the Excel file is an Access database and a Word file, the document filters will find and parse the embedded documents as well. Note that text that is black on black or white on white or red on red may be invisible when you view a file in that file’s relevant application, but it is just straight-up text for a search engine.
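The nested-document idea can be sketched as a recursive walk. The example below handles only ZIP-inside-ZIP using Python's standard zipfile module; real document filters also cover formats such as RAR, Office attachments, and email containers, which this sketch does not attempt. The helper names are hypothetical.

```python
import io
import zipfile

def walk_nested(data: bytes, path: str = ""):
    """Yield (path, bytes) for every leaf file, descending into nested ZIP files."""
    buffer = io.BytesIO(data)
    if not zipfile.is_zipfile(buffer):
        yield path, data
        return
    with zipfile.ZipFile(buffer) as archive:
        for info in archive.infolist():
            if info.is_dir():
                continue
            inner = archive.read(info)
            yield from walk_nested(inner, f"{path}/{info.filename}")

def make_zip(files: dict) -> bytes:
    """Build an in-memory ZIP from {name: bytes}; used only to create test data."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        for name, content in files.items():
            archive.writestr(name, content)
    return buffer.getvalue()

inner = make_zip({"notes.txt": b"purple budget"})
outer = make_zip({"inner.zip": inner, "readme.txt": b"top level"})
found = dict(walk_nested(outer, "outer.zip"))
for name in sorted(found):
    print(name)
```

Each leaf comes back with a path that records where it was nested, so a hit can be traced to the archive that contains it.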

One last pointer under the broader “build an index” heading: if possible, index email files directly as PST, OST, MSG, etc. files, without going through Outlook.

The search engine can index Outlook emails through Outlook, but going through Outlook / MAPI will slow down the indexer relative to direct access to these file types.

Check Index Logs

The second tip is to check the index logs. The logs can identify files that the search engine cannot index for whatever reason. A key example is “image only” PDFs.

An ordinary PDF combines text and images. You can tell that you have actual text in a PDF if you can copy and paste a selection of text into another file. But “image only” PDFs are different.

If you try to copy and paste what may look like words from these, that process goes nowhere. Because such files contain only pictures and no actual text, the search engine cannot index and search their contents. (The search engine can still index metadata, but the main event will be missing.)

Here’s the tricky part: “image only” PDFs can occur in data collections along with ordinary PDFs with no external identifiers that these “image only” PDFs are present.

But the indexing log file will flag “image only” PDFs. You can then run these “image only” PDFs through an OCR application such as Adobe Acrobat to turn them into regular PDFs and add these to your index.

Consider Document Caching

The third tip is to consider document caching in your index, where documents or other data are subject to a remote or otherwise unreliable connection or may even be completely unavailable in their original location. A quick explanation of how the search results display works helps explain this tip.

A search engine processes standalone and multithreaded search requests using data from the index itself. To display the full text with highlighted hits, the search engine goes back to the original file or other data to pull up a copy of that item. The search engine then uses the index to determine where the hits should be in that copy and marks those in the search results display.

Highlighted hits are quite literally the light that shines through your data.

If the original file is easily accessible and quick to retrieve, this process is straightforward. However, if the original file is slow to retrieve or simply gone, the display process ceases to be seamless. The answer is to cache or store a full copy of the file or other data along with the index itself. Using that cache, the display process remains smooth and instant even without access to the originals.

The disadvantage to caching is that it makes the size of the index a lot bigger, as the index is now storing the complete text of all files along with the basic index itself. But when the original is slow or unavailable, caching is well worth it.
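As a rough illustration of the trade-off, the sketch below stores a copy of each document's text next to the hit offsets, so hits can be highlighted without reopening the original file. The structure and names are hypothetical and far simpler than a real index with caching.

```python
import re

def index_with_cache(name, text):
    """Record character offsets for each word and keep a cached copy of the text."""
    offsets = {}
    for match in re.finditer(r"\w+", text):
        word = match.group().lower()
        offsets.setdefault(word, []).append((match.start(), match.end()))
    return {"name": name, "offsets": offsets, "cached_text": text}

def highlight(entry, term, open_tag="[", close_tag="]"):
    """Mark hits in the cached copy; the original file is never opened."""
    text = entry["cached_text"]
    parts, last = [], 0
    for start, end in entry["offsets"].get(term.lower(), []):
        parts.append(text[last:start])
        parts.append(open_tag + text[start:end] + close_tag)
        last = end
    parts.append(text[last:])
    return "".join(parts)

entry = index_with_cache("memo.txt", "Purple ink, purple paper.")
print(highlight(entry, "purple"))
```

The cached_text field is what makes the index larger: it duplicates the document content in exchange for display that no longer depends on the original location.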

Update Your Indexes

The fourth tip is to keep your indexes updated to reflect files that have been added, deleted, or changed. This process is easier than it may seem. Adding something new doesn’t require rebuilding an index from scratch. Rather, the search engine can automatically check each file and see if that file has been modified, deleted, or added since the last index build and simply index “the difference.”
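One simple way to compute "the difference" is to compare a snapshot of file sizes and modification times taken at the last index build with a fresh snapshot. This is a sketch of the general approach under that assumption, not the change-detection logic of any specific product; both function names are hypothetical.

```python
import os

def snapshot(root):
    """Record (size, modification time) for every file under root."""
    state = {}
    for folder, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(folder, name)
            info = os.stat(path)
            state[path] = (info.st_size, info.st_mtime)
    return state

def plan_update(previous, current):
    """Compare two snapshots and list what needs indexing or removal."""
    added = sorted(p for p in current if p not in previous)
    deleted = sorted(p for p in previous if p not in current)
    modified = sorted(
        p for p in current if p in previous and current[p] != previous[p]
    )
    return added, modified, deleted

# Hand-written snapshots standing in for two runs of snapshot().
last_build = {"a.txt": (10, 100.0), "b.txt": (20, 100.0), "c.txt": (30, 100.0)}
today = {"a.txt": (10, 100.0), "b.txt": (25, 150.0), "d.txt": (5, 160.0)}
print(plan_update(last_build, today))
```

Only the added and modified files need to be parsed again, and deleted files only need their entries removed, which is why an update is much cheaper than a full rebuild.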

A compress option streamlines the extra baggage that can follow multiple index updates.

You can also schedule automatic index updates at specific times via the Windows Task Scheduler. Importantly, searching, even concurrent searching, can continue uninterrupted as an index updates.

Refine Your Search Request

The fifth tip is to pay attention to how you frame a search request. For example, natural language searching lets you enter a “plain English” search request or even copy and paste a paragraph of text and get relevancy-ranked search results.

I use the term “plain English” here to capture the essence of natural language searching. But note that a search engine can work automatically with any of the hundreds of Unicode languages, even right-to-left languages like Hebrew and Arabic, and double-byte languages like Chinese, Japanese and Korean.

Underneath the hood, relevancy ranking works as follows. If you search for purple or blue, and blue is all over your indexed data, but purple references are much rarer, then files with purple will get a higher relevancy ranking. Furthermore, files with denser purple mentions receive an even higher relevancy ranking.
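The purple-versus-blue behavior can be approximated with a small scoring function that weights each term by its rarity across the collection and by its density within a file. This is a generic sketch in the spirit of TF-IDF weighting, offered as an assumption about the general idea and not as the actual ranking formula of dtSearch or any other engine.

```python
import math
import re

def tokenize(text):
    return re.findall(r"\w+", text.lower())

def rank(docs, query_terms):
    """Order documents by the sum of (term rarity x term density)."""
    n_docs = len(docs)
    tokens = {name: tokenize(text) for name, text in docs.items()}
    scores = {}
    for name, words in tokens.items():
        score = 0.0
        for term in query_terms:
            containing = sum(1 for other in tokens.values() if term in other)
            if containing == 0 or not words:
                continue
            rarity = math.log((1 + n_docs) / containing)  # rarer terms weigh more
            density = words.count(term) / len(words)      # denser mentions weigh more
            score += rarity * density
        scores[name] = score
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical collection: blue is everywhere, purple is rare.
docs = {
    "a": "blue sky blue sea",
    "b": "blue car purple seat",
    "c": "purple purple blue hat",
    "d": "blue blue blue boat",
}
print(rank(docs, ["purple", "blue"]))
```

Files "c" and "b" lead the list because they contain the rarer term, and "c" outranks "b" because its purple mentions are denser.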

Although natural language search requests require little effort to compose, it is often more fruitful to take the time to enter a precision search request instead.

A search engine can also support phrase searching, Boolean and/or/not search requests, proximity searching in one direction (X before Y) or both directions (X before or after Y), concept searching, metadata-specific searching, number and numeric range searching, date and date range searching, and much more.
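To show what one-directional and two-directional proximity mean in practice, here is a small sketch that works on word positions. The function names and the `ordered` parameter are hypothetical and do not reflect the query syntax of any particular search engine.

```python
import re

def positions(words, term):
    """Return every index at which term appears in the word list."""
    return [i for i, word in enumerate(words) if word == term]

def within(text, x, y, distance, ordered=False):
    """True if x is within `distance` words of y; if ordered, x must come before y."""
    words = re.findall(r"\w+", text.lower())
    for i in positions(words, x):
        for j in positions(words, y):
            gap = j - i
            if ordered and 0 < gap <= distance:
                return True
            if not ordered and 0 < abs(gap) <= distance:
                return True
    return False

text = "The purple car passed a blue truck"
print(within(text, "purple", "blue", 5, ordered=True))  # purple before blue
print(within(text, "blue", "purple", 5, ordered=True))  # blue before purple
print(within(text, "blue", "purple", 5))                # either direction
```

The same pair of words matches or fails depending on direction and distance, which is what makes proximity searching more precise than a plain Boolean "and".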

Use these different options to refine your search requests to get exactly what you are looking for. Also, don’t forget about the more specialized search options, like the ability to identify credit card numbers in data, generating and searching for file hash values, positive and negative variable term weighting including in specific metadata, etc.

One specific search option that you may want to use as an add-on to both natural language and structured search requests is fuzzy searching. Fuzzy searching looks for minor typographical deviations that can crop up in emails and in OCR text. So, for example, with a low level of fuzziness, a search for purple would also pick up a misspelling such as purpel, so that you find what you are looking for even with slight misspellings.
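A standard way to measure "minor typographical deviations" is edit distance: the number of single-character insertions, deletions, or substitutions needed to turn one word into another. The sketch below uses plain Levenshtein distance as an assumed stand-in; actual engines may define fuzziness differently. The vocabulary is hypothetical.

```python
def edit_distance(a, b):
    """Levenshtein distance computed one row at a time."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]

def fuzzy_matches(term, vocabulary, max_edits=1):
    """Return indexed words within max_edits of the search term."""
    return sorted(w for w in vocabulary if edit_distance(term, w) <= max_edits)

# Hypothetical indexed words, including an OCR-style error (1 for l).
vocabulary = ["purple", "purp1e", "purpel", "people", "maple"]
print(fuzzy_matches("purple", vocabulary, max_edits=1))
print(fuzzy_matches("purple", vocabulary, max_edits=2))
```

Raising the allowed distance from 1 to 2 picks up the swapped-letter variant "purpel" (a swap counts as two edits under this measure) but also the unrelated word "people", which is why a low level of fuzziness is usually the better starting point.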

A final point regarding search requests: you are not stuck with your default sorting option.

If relevancy ranking from natural language searching is your default sorting option, you can click to immediately re-sort by ascending or descending file date, ascending or descending file size, the presence of keywords in specific metadata, etc. Each of these options offers a different window into the search results and retrieved items.

Tag Relevant Files

The sixth tip is that once you find what you are looking for, you can tag the critical files you need and copy them.

You can even copy select files from inside a larger email archive or a compressed ZIP or RAR-type archive (no separate “un-ZIP” required). You can also tell the search engine to prepare a search report showing all hits with as much context around each hit as you want.

Search reports can work across all retrieved files, or you can tag the files to include in a search report and limit the search report to just these.
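A search report of this kind is essentially a keyword-in-context listing. The following sketch shows the idea with an adjustable number of context words around each hit; the function name, parameters, and output format are hypothetical and much simpler than a real search report.

```python
import re

def search_report(docs, term, context_words=2):
    """List every hit with the requested number of words on each side."""
    lines = []
    for name in sorted(docs):
        words = re.findall(r"\S+", docs[name])
        for i, word in enumerate(words):
            if word.strip(".,;:!?").lower() == term.lower():
                start = max(0, i - context_words)
                snippet = " ".join(words[start:i + context_words + 1])
                lines.append(f"{name}: ...{snippet}...")
    return lines

# Hypothetical tagged files to include in the report.
tagged = {
    "a.txt": "The purple folder holds the budget.",
    "b.txt": "No hits here.",
}
for line in search_report(tagged, "purple", context_words=1):
    print(line)
```

Passing only the tagged files, as here, limits the report to the items you selected; passing every retrieved file produces a report across the whole result set.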

These tips will help shine a light through terabytes of data, whether the data you are working with is your own or comes from a third party and you have never seen the dataset before.

Image Credit: thirdman; pexels; thank you!

Elizabeth Thede

Elizabeth is director of sales at dtSearch. An attorney by training, Elizabeth has spent many years in the software industry. At home, she grows a lot of plants, and has a poorly behaved but very cute rescue dog. Elizabeth also writes technical articles and is a regular contributor to The Price of Business Nationally Syndicated by USA Business Radio, with current articles on the USA Daily Times and The Daily Blaze.

You may like

-

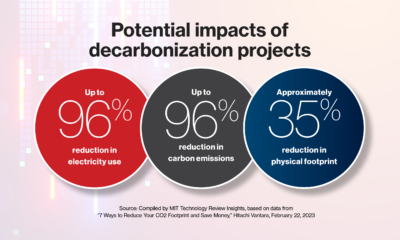

Sustainability starts with the data center

-

Meta is giving researchers more access to Facebook and Instagram data

-

The Download: are we alone, and private military data for sale

-

It’s shockingly easy to buy sensitive data about US military personnel

-

The Download: military personnel data for sale, and AI watermarking

-

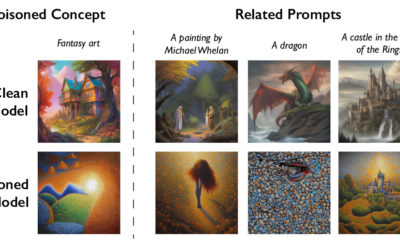

This new data poisoning tool lets artists fight back against generative AI

Politics

Fintech Kennek raises $12.5M seed round to digitize lending

Published 7 months ago on 10/11/2023

By Drew Simpson

London-based fintech startup Kennek has raised $12.5 million in seed funding to expand its lending operating system.

According to an Oct. 10 tech.eu report, the round was led by HV Capital and included participation from Dutch Founders Fund, AlbionVC, FFVC, Plug & Play Ventures, and Syndicate One. Kennek offers software-as-a-service tools to help non-bank lenders streamline their operations using open banking, open finance, and payments.

The platform aims to automate time-consuming manual tasks and consolidate fragmented data to simplify lending. Xavier De Pauw, founder of Kennek, said:

“Until kennek, lenders had to devote countless hours to menial operational tasks and deal with jumbled and hard-coded data – which makes every other part of lending a headache. As former lenders ourselves, we lived and breathed these frustrations, and built kennek to make them a thing of the past.”

The company said the latest funding round was oversubscribed and closed quickly despite the challenging fundraising environment. The new capital will be used to expand Kennek’s engineering team and strengthen its market position in the UK while exploring expansion into other European markets. Barbod Namini, Partner at lead investor HV Capital, commented on the investment:

“Kennek has developed an ambitious and genuinely unique proposition which we think can be the foundation of the entire alternative lending space. […] It is a complicated market and a solution that brings together all information and stakeholders onto a single platform is highly compelling for both lenders & the ecosystem as a whole.”

The fintech lending space has grown rapidly in recent years, but many lenders still rely on legacy systems and manual processes that limit efficiency and scalability. Kennek aims to leverage open banking and data integration to provide lenders with a more streamlined, automated lending experience.

The seed funding will allow the London-based startup to continue developing its platform and expanding its team to meet demand from non-bank lenders looking to digitize operations. Kennek’s focus on the UK and Europe also comes amid rising adoption of open banking and open finance in the regions.

Featured Image Credit: Photo from Kennek.io; Thank you!

Radek Zielinski

Radek Zielinski is an experienced technology and financial journalist with a passion for cybersecurity and futurology.

Politics

Fortune 500’s race for generative AI breakthroughs

Published 7 months ago on 10/11/2023

By Drew Simpson

As excitement around generative AI grows, Fortune 500 companies, including Goldman Sachs, are carefully examining the possible applications of this technology. A recent survey of U.S. executives indicated that 60% believe generative AI will substantially impact their businesses in the long term. However, they anticipate a one to two-year timeframe before implementing their initial solutions. This optimism stems from the potential of generative AI to revolutionize various aspects of businesses, from enhancing customer experiences to optimizing internal processes. In the short term, companies will likely focus on pilot projects and experimentation, gradually integrating generative AI into their operations as they witness its positive influence on efficiency and profitability.

Goldman Sachs’ Cautious Approach to Implementing Generative AI

In a recent interview, Goldman Sachs CIO Marco Argenti revealed that the firm has not yet implemented any generative AI use cases. Instead, the company focuses on experimentation and setting high standards before adopting the technology. Argenti recognized the desire for outcomes in areas like developer and operational efficiency but emphasized ensuring precision before putting experimental AI use cases into production.

According to Argenti, striking the right balance between driving innovation and maintaining accuracy is crucial for successfully integrating generative AI within the firm. Goldman Sachs intends to continue exploring this emerging technology’s potential benefits and applications while diligently assessing risks to ensure it meets the company’s stringent quality standards.

One possible application for Goldman Sachs is in software development, where the company has observed a 20-40% productivity increase during its trials. The goal is for 1,000 developers to utilize generative AI tools by year’s end. However, Argenti emphasized that a well-defined expectation of return on investment is necessary before fully integrating generative AI into production.

To achieve this, the company plans to implement a systematic and strategic approach to adopting generative AI, ensuring that it complements and enhances the skills of its developers. Additionally, Goldman Sachs intends to evaluate the long-term impact of generative AI on their software development processes and the overall quality of the applications being developed.

Goldman Sachs’ approach to AI implementation goes beyond merely executing models. The firm has created a platform encompassing technical, legal, and compliance assessments to filter out improper content and keep track of all interactions. This comprehensive system ensures seamless integration of artificial intelligence in operations while adhering to regulatory standards and maintaining client confidentiality. Moreover, the platform continuously improves and adapts its algorithms, allowing Goldman Sachs to stay at the forefront of technology and offer its clients the most efficient and secure services.

Featured Image Credit: Photo by Google DeepMind; Pexels; Thank you!

Deanna Ritchie

Managing Editor at ReadWrite

Deanna is the Managing Editor at ReadWrite. Previously she worked as the Editor in Chief for Startup Grind and has over 20 years of experience in content management and content development.

Politics

UK seizes web3 opportunity simplifying crypto regulations

Published 7 months ago on 10/10/2023

By Drew Simpson

As Web3 companies increasingly consider leaving the United States due to regulatory ambiguity, the United Kingdom must simplify its cryptocurrency regulations to attract these businesses. The conservative think tank Policy Exchange recently released a report detailing ten suggestions for improving Web3 regulation in the country. Among the recommendations are reducing liability for token holders in decentralized autonomous organizations (DAOs) and encouraging the Financial Conduct Authority (FCA) to adopt alternative Know Your Customer (KYC) methodologies, such as digital identities and blockchain analytics tools. These suggestions aim to position the UK as a hub for Web3 innovation and attract blockchain-based businesses looking for a more conducive regulatory environment.

Streamlining Cryptocurrency Regulations for Innovation

To make it easier for emerging Web3 companies to navigate existing legal frameworks and contribute to the UK’s digital economy growth, the government must streamline cryptocurrency regulations and adopt forward-looking approaches. By making the regulatory landscape clear and straightforward, the UK can create an environment that fosters innovation, growth, and competitiveness in the global fintech industry.

The Policy Exchange report also recommends not weakening self-hosted wallets or treating proof-of-stake (PoS) services as financial services. This approach aims to protect the fundamental principles of decentralization and user autonomy while strongly emphasizing security and regulatory compliance. By doing so, the UK can nurture an environment that encourages innovation and the continued growth of blockchain technology.

Despite recent strict measures by UK authorities, such as His Majesty’s Treasury and the FCA, toward the digital assets sector, the proposed changes in the Policy Exchange report strive to make the UK a more attractive location for Web3 enterprises. By adopting these suggestions, the UK can demonstrate its commitment to fostering innovation in the rapidly evolving blockchain and cryptocurrency industries while ensuring a robust and transparent regulatory environment.

The ongoing uncertainty surrounding cryptocurrency regulations in various countries has prompted Web3 companies to explore alternative jurisdictions with more precise legal frameworks. As the United States grapples with regulatory ambiguity, the United Kingdom can position itself as a hub for Web3 innovation by simplifying and streamlining its cryptocurrency regulations.

Featured Image Credit: Photo by Jonathan Borba; Pexels; Thank you!

Deanna Ritchie